Pokemon GO. Harry Potter: Wizards Unite. Snapchat. These applications brought Augmented Reality (AR) into the limelight in recent years. The technology has truly enhanced the way we interact with our world, making it a more fun, engaging, and vibrant place to live in.

While it may seem like this technology is a recent invention, you’ll be amazed to know that AR actually has its roots way back in the 1800s.

So sit tight as I attempt to unravel this 200-year-old concept in 3 minutes.

What is AR?

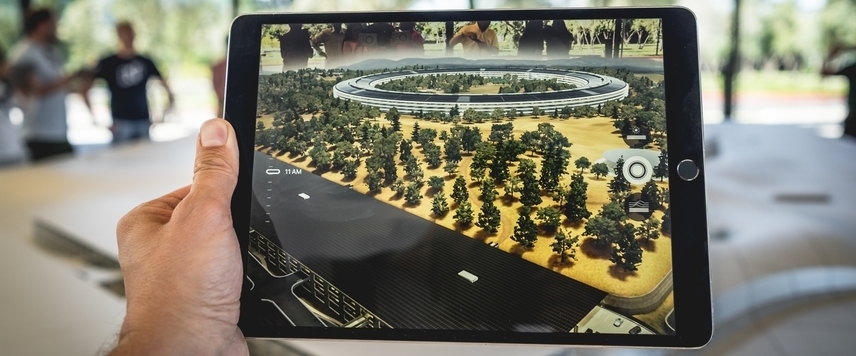

The Merriam-Webster dictionary defines AR as an enhanced version of reality created by using technology to overlay digital information onto a live camera feed.

In simpler terms, AR allows digital content to look like it is part of the physical world. This differs from Virtual Reality (VR), which transports users into a completely digital world.

Image source: Unsplash


So, how does AR work?

Every AR system comprises 3 components - hardware, software, and the application. To explain each one, I will use the smartphone as a running example.

1) Hardware

The hardware refers to the equipment through which the virtual images are projected. In this case, it is your smartphone. For AR to work on such a device, it must have sensors and processors that can support the high demands of AR. Here are some of the key hardware components:

Processor: This is the brain of the device. It determines the speed of your phone and whether it is able to manage the heavy AR requirements, in addition to its normal phone functions.

Graphics Processing Unit (GPU): The GPU handles the visual rendering of a phone’s display. AR requires high-performance GPUs so that the digital content can be created and superimposed seamlessly.

Sensors: This is the component that gives your device the ability to make sense of its environment. Common sensors required for AR include:

- Depth Sensor: To measure depth and distance

- Gyroscope: To detect the orientation and rotation of your phone

- Proximity Sensor: To detect how close a nearby object is to the phone

- Accelerometer: To detect changes in velocity and movement (linear acceleration)

- Light Sensor: To measure light intensity and brightness

These hardware specifications are crucial for AR to function properly on a device. This is one of the reasons why only the later generations of mobile phones have AR capabilities.
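To make the hardware requirement concrete, here is a minimal Python sketch of a capability check. It is illustrative only: the split into "required" and "optional" sensors is my own assumption rather than a rule from any platform, and the `Device` type and `ar_support` function are hypothetical names. Real platforms expose their own checks for AR support.

```python
from dataclasses import dataclass

# Assumption for illustration only: motion sensors are treated as mandatory,
# the remaining sensors as optional extras that unlock richer AR features.
REQUIRED = frozenset({"gyroscope", "accelerometer"})
OPTIONAL = frozenset({"depth", "proximity", "light"})


@dataclass(frozen=True)
class Device:
    """Hypothetical description of a phone and the sensors it carries."""
    name: str
    sensors: frozenset


def ar_support(device):
    """Return (supported, missing_required, available_optional)."""
    missing = REQUIRED - device.sensors
    extras = OPTIONAL & device.sensors
    return (not missing, sorted(missing), sorted(extras))


old_phone = Device("old phone", frozenset({"accelerometer", "proximity"}))
new_phone = Device(
    "new phone",
    frozenset({"gyroscope", "accelerometer", "depth", "light"}),
)

for phone in (old_phone, new_phone):
    supported, missing, extras = ar_support(phone)
    print(phone.name, "supports AR:", supported, "| missing:", missing, "| extras:", extras)
```

In this sketch the older phone fails the check because it lacks a gyroscope, which mirrors why older handsets tend to miss out on AR.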

2) Software

The second component in an AR system is the software, and this is where the AR magic begins. ARKit (Apple, for iOS) and ARCore (Google, for Android) are some examples of AR software. These programs rely on 3 fundamental technologies to build augmented reality experiences.

Environment understanding: This allows your phone to detect prominent feature points and flat surfaces to map out its surroundings. The system can then place virtual objects accurately on these surfaces.

Motion tracking: This lets your phone determine its position relative to its environment. Virtual objects can then stay anchored to their designated spots in the image as the phone moves.

Light estimation: This gives your phone the ability to perceive the environment’s current lighting condition. Virtual objects can then be placed in the same lighting conditions to enhance realism.

Notice how the hardware and software work hand in hand. If the hardware does not possess the sensors to measure light intensity, the software’s light estimation capabilities will be of no use.
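As a rough illustration of the light estimation idea, the Python sketch below averages the brightness of a camera frame and dims a virtual object's colour to match. This is a simplified stand-in that I am assuming for explanation purposes, not how ARKit or ARCore actually compute their estimates. The function names are hypothetical, and a frame is modelled as a plain grid of RGB tuples.

```python
def estimate_ambient_intensity(frame):
    """Average luminance of an RGB frame, scaled to the range 0.0-1.0.

    `frame` is a list of rows, each row a list of (r, g, b) tuples (0-255).
    """
    total = 0.0
    count = 0
    for row in frame:
        for r, g, b in row:
            # Rec. 601 luma weights: green contributes most to perceived brightness.
            total += 0.299 * r + 0.587 * g + 0.114 * b
            count += 1
    if count == 0:
        raise ValueError("frame contains no pixels")
    return total / (count * 255.0)


def shade(color, intensity):
    """Scale a virtual object's RGB colour by the estimated intensity."""
    return tuple(round(channel * intensity) for channel in color)


# A dim, uniformly grey 2x2 "camera frame".
dim_frame = [[(128, 128, 128), (128, 128, 128)],
             [(128, 128, 128), (128, 128, 128)]]

intensity = estimate_ambient_intensity(dim_frame)
print(shade((200, 100, 50), intensity))
```

The darker the frame, the lower the intensity, and the darker the virtual object is drawn, which is what keeps it from looking pasted on.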

3) Application

Image source: Pixabay

The last component of an AR system is the application itself.

It is important to be clear that the software allows AR applications to run on your smartphone but does not possess the AR features. The AR features such as the 3D objects and filters come from the mobile applications themselves.

Applications such as Snapchat, Pokemon GO, and IKEA Place have their own database of virtual images and triggering logic. These applications pull virtual images from their database and map them out onto the live images.
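To give a feel for what "mapping a virtual image onto the live image" can mean at the pixel level, here is a small Python sketch using standard alpha blending. It assumes a frame is a grid of RGB tuples and a virtual image (sprite) is a grid of RGBA tuples. The function names are hypothetical, and real applications do this on the GPU with full 3D rendering rather than pixel by pixel.

```python
def blend_pixel(camera_rgb, overlay_rgba):
    """Alpha-blend one overlay pixel on top of one camera pixel."""
    r, g, b, a = overlay_rgba
    alpha = a / 255.0  # 0.0 = fully transparent, 1.0 = fully opaque
    return tuple(
        round(alpha * over + (1.0 - alpha) * cam)
        for over, cam in zip((r, g, b), camera_rgb)
    )


def overlay(frame, sprite, top, left):
    """Return a copy of `frame` with `sprite` composited at (top, left).

    Sprite pixels that fall outside the frame are simply skipped.
    """
    out = [list(row) for row in frame]
    for dy, sprite_row in enumerate(sprite):
        for dx, pixel in enumerate(sprite_row):
            y, x = top + dy, left + dx
            if 0 <= y < len(out) and 0 <= x < len(out[y]):
                out[y][x] = blend_pixel(out[y][x], pixel)
    return out


# A 2x2 black "camera frame" and a 1x1 opaque red "virtual image".
camera_frame = [[(0, 0, 0), (0, 0, 0)],
                [(0, 0, 0), (0, 0, 0)]]
red_dot = [[(255, 0, 0, 255)]]

augmented = overlay(camera_frame, red_dot, top=1, left=1)
print(augmented)
```

The original frame is left untouched and a new, augmented frame is returned, so the same camera image could be reused for several overlays.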

Working principles of AR

There are generally 2 ways that applications trigger AR features - marker-based tracking and marker-less tracking.

Marker-based tracking: This mode requires optical markers, such as QR codes, to trigger the AR features. When you point your mobile camera at one of these markers, the application recognises it and superimposes the digital image on the screen.

Marker-less tracking: This mode is premised on object recognition. AR applications that work based on marker-less tracking are triggered when they recognise certain real-world features. In the case of Snapchat, that real-world object is your face.
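The two trigger modes can be sketched in a few lines of Python. In this hypothetical example I assume that some upstream component has already decoded any markers in the frame and labelled any recognised features. The catalogue contents and the `assets_for_frame` function are invented for illustration and do not come from any real application.

```python
# Hypothetical asset catalogues: trigger key -> name of the virtual asset to show.
MARKER_ASSETS = {"promo-poster": "discount_banner"}
FEATURE_ASSETS = {"face": "face_filter", "floor": "furniture_model"}


def assets_for_frame(decoded_markers, recognised_features):
    """Choose which virtual assets to overlay for one camera frame.

    decoded_markers: payloads decoded from optical markers (e.g. QR codes).
    recognised_features: labels from an object or feature recogniser.
    Both inputs are assumed to be produced elsewhere.
    """
    triggered = []
    # Marker-based: an exact match on a known marker payload.
    for payload in decoded_markers:
        if payload in MARKER_ASSETS:
            triggered.append(("marker", MARKER_ASSETS[payload]))
    # Marker-less: a match on a recognised real-world feature.
    for label in recognised_features:
        if label in FEATURE_ASSETS:
            triggered.append(("markerless", FEATURE_ASSETS[label]))
    return triggered


print(assets_for_frame(["promo-poster"], []))
print(assets_for_frame([], ["face", "tree"]))
```

Unknown markers and unrecognised features trigger nothing, so the camera feed is shown as-is until the application finds something it knows.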

AR Implementations In The Marketing World

The applications for AR in the marketing world are plentiful. Imagine a world where pointing your camera at a brick-and-mortar restaurant shows you reviews of the food there, or where people receive special discount codes when they look at your shop through their AR lenses. Not only is this a more interactive means of engaging your customers, it is also a faster way (compared to a Google search) for them to find information specific to you.

AR is a powerful tool when used wisely. Here are some ingenious ways companies have applied AR in their marketing.

Burger King - using AR to burn competitors’ advertisements.

Ready Player One Movie - Interactive movie posters.

Timberland - using AR to let people quickly see how they look in its apparel.

So that’s AR in a nutshell. Next time someone asks you how AR works, you’ll be ready to answer them.

Should AR be part of your marketing mix? Let our strategy team help you make that assessment.